What Training ML Models Taught Me About Being Human

Starting my first semester in Georgia Tech's OMSCS program, I braced for a tidal wave of math. What I wasn't prepared for was discovering that Machine Learning concepts aren't just technical; they're a shockingly precise new language for the human condition.

It's wild how the logic of code can hold a mirror up to our beautiful internal chaos (...when it's not busy trying to sell us more shoes, anyway).

At the end of the semester, I realized:

We're all just incredibly complex models, built on 3.5 billion years of evolutionary code with zero documentation, trying to optimize for happiness in a loss landscape we barely understand. The algorithm doesn't make life easier. But it makes it forgivable. And in the end, maybe that's the only optimization that matters.

Here are a few more things that learning about models reveals about being human.

1. Local Minima: The Comfort of "Good Enough"

There's a universal human experience of being "stuck in a rut": the "good enough" job, the "fine" relationship, the comfortable belief system. It's not perfect, but it's safe, and the thought of leaving is terrifying. Why? Because any first step in a new direction (quitting the job, having the hard conversation) often feels worse before it gets better.

In Machine Learning, there's a perfect term for this: a "local minimum."

When a model is learning (usually by gradient descent), it's like a hiker trying to find the lowest valley in a massive, fog-covered mountain range. A "local minimum" is a "good enough" valley. From the hiker's limited perspective, it looks like the bottom: any small step in any direction goes uphill. So the model stops, convinced it has found the answer, even though a much deeper, better valley might be just over the next hill.

ML optimizers use tricks like "momentum" (carrying speed over from previous steps) or "randomness" (such as the noise in stochastic gradient descent) to jolt a model out of these local minima. For humans, this might be travel, a new hobby, or a difficult conversation: anything that introduces enough energy to climb out of the comfortable valley.
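To make the hiker picture concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption: the loss f(x) = x^4 - 3x^2 + x is made up because it has a shallow valley near x = 1.13 and a deeper one near x = -1.30, and the step size, momentum factor `beta`, and starting point are hand-picked. Started at x = 2, plain gradient descent settles into the shallow valley; the same walk with heavy-ball momentum keeps enough speed to coast over the small hill between them and lands in the deeper one.

```python
# A toy, hypothetical loss with two valleys:
#   f(x) = x**4 - 3*x**2 + x
# shallow local minimum near x = 1.13, deeper global minimum near x = -1.30.


def loss(x: float) -> float:
    return x**4 - 3 * x**2 + x


def grad(x: float) -> float:
    """Derivative of the toy loss."""
    return 4 * x**3 - 6 * x + 1


def descend(x: float, lr: float = 0.01, beta: float = 0.0, steps: int = 1000) -> float:
    """Gradient descent with heavy-ball momentum.

    beta = 0.0 is plain gradient descent; beta > 0 keeps a fraction of the
    previous step's velocity, so the walker can coast over small hills.
    """
    velocity = 0.0
    for _ in range(steps):
        velocity = beta * velocity - lr * grad(x)
        x += velocity
    return x


plain = descend(2.0)               # no momentum
coasting = descend(2.0, beta=0.9)  # momentum factor 0.9

print(f"plain GD:      x = {plain:.3f}, loss = {loss(plain):.3f}")
print(f"with momentum: x = {coasting:.3f}, loss = {loss(coasting):.3f}")
```

The only difference between the two runs is `beta`. Momentum is not a general guarantee of finding the deepest valley (with other starting points or settings it can overshoot or stall); here it simply carries enough speed past the hill that traps plain gradient descent.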

It's a technical justification for courage, and an algorithmic argument for why "good enough" sometimes isn't enough.

2. The War in Our Minds: Bias vs. Variance

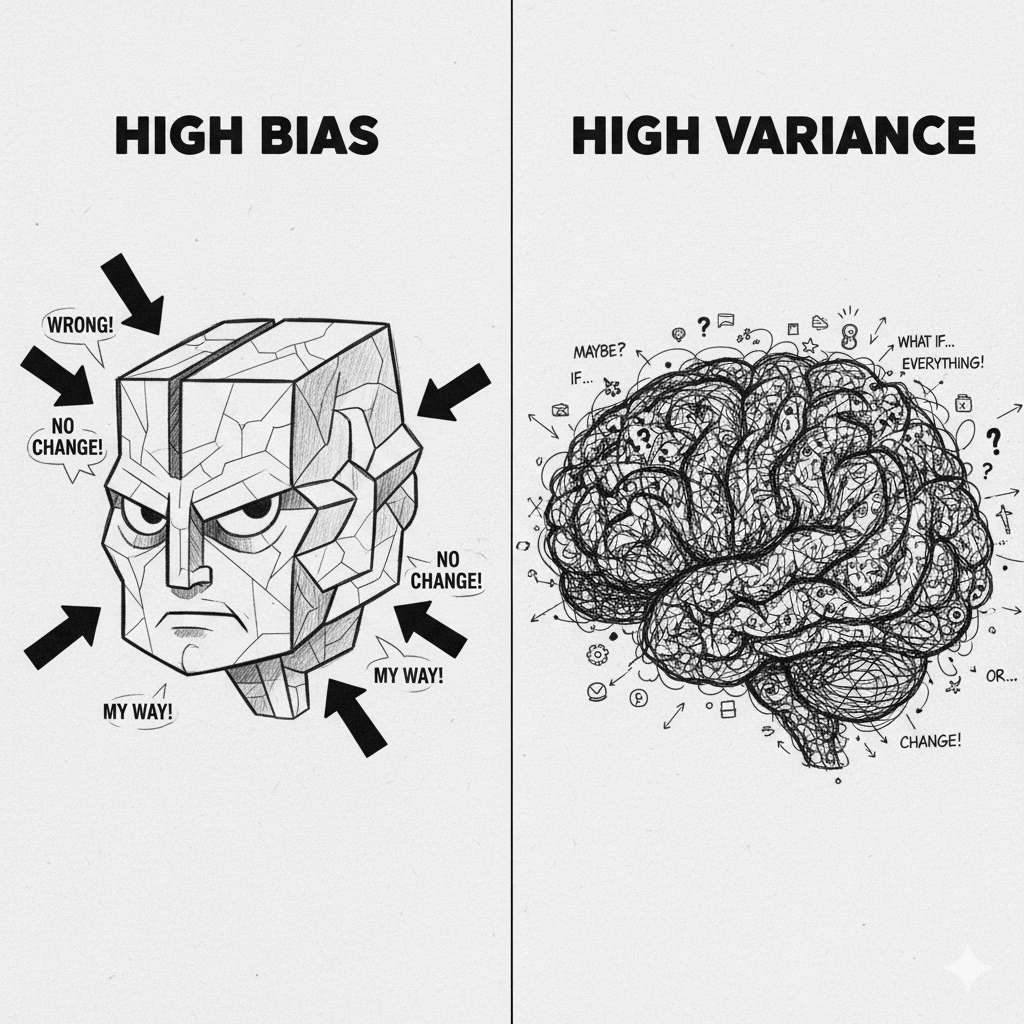

Human minds are constantly fighting a war on two fronts. On one side, there's the tendency to make lazy, biased, sweeping assumptions (stereotypes, simple ideologies). On the other, there's the temptation to overthink every tiny, meaningless detail (late-night anxiety, replaying one awkward conversation).

ML has a name for this exact war: Bias vs. Variance.

High Bias (Underfitting): The "lazy assumption" model. It's too simple to capture the real pattern. It's fast, but it's consistently wrong, even on the examples it learned from, and especially about individuals. It's the "fast thinking" heuristic that psychologist Daniel Kahneman spent a career studying.

High Variance (Overfitting): The "anxious overthinking" model. It's so obsessed with every tiny data point from the past that it "memorizes the noise" instead of learning the general pattern. It looks brilliant on what it has already seen and stumbles on anything new. It's what happens when someone is so haunted by one past failure that they can't form a stable, general principle to live by.
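A small, hypothetical experiment puts numbers on this war. The sketch below assumes a made-up world where y = 2x plus random noise, and compares three deliberately crude stand-ins: a constant model that always predicts the training average (high bias), a 1-nearest-neighbor lookup that memorizes every training point, noise included (high variance), and an ordinary least-squares line in between. The data generator, sample sizes, and seed are illustrative choices.

```python
import random

rng = random.Random(0)  # fixed seed so the sketch is repeatable


def make_data(n: int) -> list[tuple[float, float]]:
    """Hypothetical world: y = 2x plus Gaussian noise (standard deviation 1)."""
    points = []
    for _ in range(n):
        x = rng.uniform(0, 10)
        points.append((x, 2 * x + rng.gauss(0, 1)))
    return points


train, test = make_data(500), make_data(500)


def mse(model, data) -> float:
    """Mean squared error of a model on (x, y) pairs."""
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)


# High bias: ignores x entirely and predicts the training average.
mean_y = sum(y for _, y in train) / len(train)


def constant_model(x: float) -> float:
    return mean_y


# High variance: returns the label of the closest memorized training point.
def memorizer(x: float) -> float:
    return min(train, key=lambda p: abs(p[0] - x))[1]


# In between: ordinary least-squares line y = a*x + b.
mean_x = sum(x for x, _ in train) / len(train)
sxx = sum((x - mean_x) ** 2 for x, _ in train)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in train)
a = sxy / sxx
b = mean_y - a * mean_x


def line_model(x: float) -> float:
    return a * x + b


for name, model in [("constant", constant_model), ("memorizer", memorizer), ("line", line_model)]:
    print(f"{name:>9}: train MSE = {mse(model, train):6.2f}, test MSE = {mse(model, test):6.2f}")
```

Exact numbers depend on the seed, but the shape of the result is the point: the memorizer scores a perfect zero on its training data and still loses to the plain line on fresh data, while the constant model is mediocre everywhere.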

Wisdom, then, isn't an "answer." It's the exhausting, lifelong art of balancing these two, knowing I'll get it wrong, over and over, on both sides.

3. The Courage to Prune (Regularization)

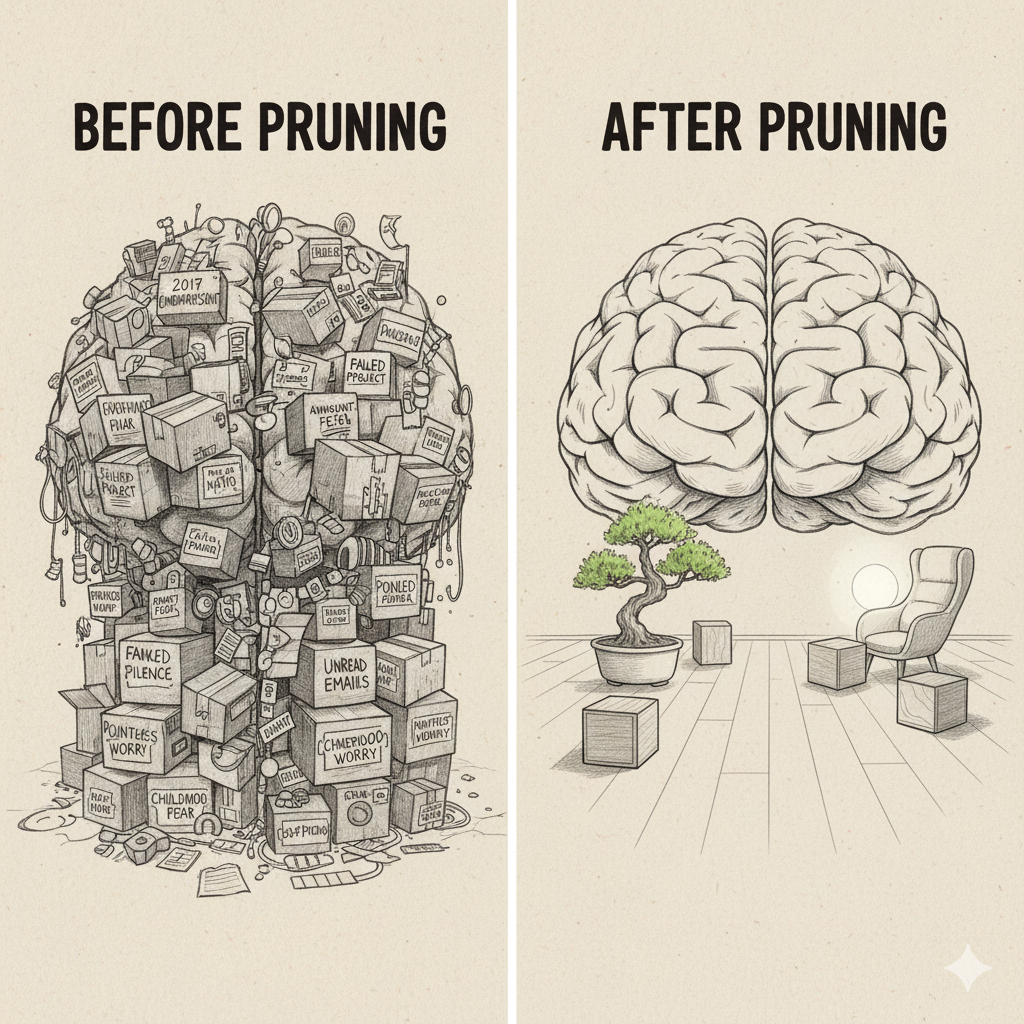

So how do you fix that "anxious overthinking" model?

In ML, you use a technique called "regularization." It's a way to penalize a model for being too complex. Some types of regularization, most famously L1 (also called the "lasso"), go further and force the model to "zero out" the weight of factors that don't really matter (like a quirk that only ever showed up in that one weird outlier from 2017 the model is obsessed with).

It's an algorithm that forces the model to prove each belief is worth its cost. If it can't, it's zeroed out. Gone.
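Here is what "zeroing out" can look like in plain Python, as a minimal sketch assuming L1 regularization (the lasso) solved by proximal gradient descent, also known as ISTA. After each ordinary gradient step, a soft-thresholding step pulls every weight toward zero by a fixed amount, and any weight smaller than that amount snaps to exactly 0. The four-row dataset, the penalty strength `lam`, and the function names are hypothetical; the data is built so that one feature matters a lot (true weight 3.0) and one barely matters (true weight 0.2).

```python
def soft_threshold(z: float, t: float) -> float:
    """Proximal operator of t*|w|: shrink z toward 0 by t, snapping small values to exactly 0."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0


def lasso_ista(X: list[list[float]], y: list[float], lam: float,
               lr: float = 0.1, steps: int = 500) -> list[float]:
    """Minimize (1/(2n)) * ||y - Xw||^2 + lam * ||w||_1 with proximal gradient descent (ISTA)."""
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(steps):
        residual = [y[i] - sum(X[i][j] * w[j] for j in range(d)) for i in range(n)]
        grad = [-sum(X[i][j] * residual[i] for i in range(n)) / n for j in range(d)]
        # Ordinary gradient step, then the L1 "prune" step.
        w = [soft_threshold(w[j] - lr * grad[j], lr * lam) for j in range(d)]
    return w


# Hypothetical data: y = 3.0 * (important feature) + 0.2 * (minor feature).
X = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
y = [3.2, 2.8, -2.8, -3.2]

print("no penalty:", lasso_ista(X, y, lam=0.0))  # keeps both weights (about 3.0 and 0.2)
print("L1 penalty:", lasso_ista(X, y, lam=0.5))  # minor weight becomes exactly 0.0
```

The penalty also shrinks the important weight (from 3.0 to 2.5): L1 charges every factor the same price per unit of weight, and only the ones that can't cover it are cut to zero.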

This is the real "art of letting go." It's not a passive fading; it's an active, painful, necessary pruning. There's a common assumption that learning is about adding: more facts, more experiences. But ML shows that a key part of growth is pruning.

It's the courage to look at a grudge or a fear and say, "You are no longer predictive of my happiness. Your weight is now zero. Good riddance."

4. The Illusion of the "Individual Self" (Transfer Learning)

There's a romantic cultural myth of the "self-made" individual who learns everything from a blank slate (a concept that was probably invented to sell more biographies).

In modern deep learning, you rarely train a big model from a completely blank slate. One of the most powerful techniques is "transfer learning." You take a massive model that a company like Google or OpenAI spent millions training on huge swaths of the internet, and you just "fine-tune" its last few layers on your specific problem.
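A toy version of "freeze most of the model, fine-tune the last layer" fits in a few lines of plain Python. Everything here is an assumption for illustration: the "pretrained" base is just two hard-coded ReLU units standing in for weights that, in real transfer learning, would come from a large model trained elsewhere, and the new task (predict |x|) is invented. Only the small head on top is trained; the base weights are never touched.

```python
# Hypothetical "pretrained" base: two ReLU units with hard-coded weights.
# In real transfer learning these would be loaded from a large trained model.
# A tuple is immutable, which makes the "frozen" part literal here.
BASE_WEIGHTS = (1.0, -1.0)


def base_features(x: float) -> list[float]:
    """Frozen feature extractor: computes ReLU(w * x) for each base weight."""
    return [max(0.0, w * x) for w in BASE_WEIGHTS]


def head_loss(head: list[float], data: list[tuple[float, float]]) -> float:
    """Half mean squared error of the head's predictions."""
    total = 0.0
    for x, y in data:
        h = base_features(x) + [1.0]  # constant 1.0 feeds the bias term
        total += (sum(w * f for w, f in zip(head, h)) - y) ** 2
    return total / (2 * len(data))


def fine_tune_head(data: list[tuple[float, float]], lr: float = 0.1, steps: int = 2000) -> list[float]:
    """Gradient descent on the head only; the base is never updated."""
    head = [0.0, 0.0, 0.0]  # two feature weights plus a bias, starting from scratch
    n = len(data)
    for _ in range(steps):
        grads = [0.0, 0.0, 0.0]
        for x, y in data:
            h = base_features(x) + [1.0]
            err = sum(w * f for w, f in zip(head, h)) - y
            for j in range(3):
                grads[j] += err * h[j] / n
        head = [w - lr * g for w, g in zip(head, grads)]
    return head


# New, invented task: predict |x| from a handful of examples.
task = [(x, abs(x)) for x in (-2.0, -1.0, 0.0, 1.0, 2.0)]
head = fine_tune_head(task)
print("head weights:", [round(w, 3) for w in head])
print("loss before:", head_loss([0.0, 0.0, 0.0], task), "after:", head_loss(head, task))
```

The design mirrors the real thing at a tiny scale: the expensive, general part is reused as-is, and only a few parameters adapt to the new problem. In a framework like PyTorch, the same idea is usually expressed by setting `requires_grad = False` on the base model's parameters and training only a new final layer.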

This concept is a haunting one. The "I" is not a blank slate. It is a 3.5-billion-year-old evolutionary model, "pre-trained" on survival, fear, and belonging. Then, it was "fine-tuned" for a few decades by a specific family, culture, and set of experiences.

On one hand, it's humbling. Our "brilliant" insights are probably just small tweaks to a massive model built by others. But it's also incredibly powerful. It means we can "download" the "pre-trained models" of history by reading books (or by arguing on the internet, which is just a very, very noisy fine-tuning dataset).

It suggests our personality isn't a static thing; it's a model in a constant state of fine-tuning. And it means that nothing is truly wasted: every "failed" career or relationship was just pre-training for the next task we haven't even seen yet.

The Reframe

These concepts don't just describe code. They describe us with an uncomfortable precision that philosophy has been circling around for centuries.

There's something profound about viewing human behavior through this lens. The harsh self-judgment that plagues so many of us starts to make less sense. Not because the struggle disappears, but because we can see it for what it is: the predictable behavior of a learning system doing exactly what learning systems do.

We are models in training.

Like any model worth building, we are:

- Stuck in local minima when we convince ourselves that "this is just how things are"

- Exhibiting high variance when we spiral over one comment, one glance, one memory

- Showing high bias when we flatten complex humans into comfortable stereotypes

- Desperately in need of regularization when we carry grudges that no longer serve us

- Constantly transfer learning from every book, conversation, and heartbreak

This parallel reframes suffering itself. That feeling of being "stuck"? It's not a character flaw. It's a convergence problem. That anxiety that replays every mistake? It's not weakness. It's overfitting to noise. That bias we're all fighting? It's not evil. It's our brain's computational shortcut to survive a world with infinite variables and finite time.

This doesn't excuse anything. But it does something more important: it removes the shame.

If you've had similar experiences learning ML/AI (or anything technical that unexpectedly became personal), I'd be curious to hear about it. Drop me a note or share your thoughts.

Fatma Ali

Software Engineer specializing in React, TypeScript, and Next.js
